How AI Understands Human Language: The Simple Guide to NLP

You pull out your phone, tap the little microphone, and ask:

“What’s the weather tomorrow?”

Or maybe you open ChatGPT and type:

“Write an email for my boss explaining why I’ll be late.”

Or perhaps, in a moment of pure wonder, you ask it to explain a black hole.

And just like that — almost like a conversation held across a candlelit table — the AI answers. Clearly. Correctly. Sometimes even beautifully.

But here’s the question that should stop you in your tracks:

How does AI actually understand what those words mean?

It’s not magic. It’s not a tiny human sitting inside your phone. It’s something called Natural Language Processing — NLP for short — and it’s honestly one of the most quietly extraordinary things powering the digital world we live in today. Let’s walk through it together, like old friends settling in for a long conversation.

What It Means for AI to “Understand” Language

Let’s be honest about something first, because the truth deserves to be spoken plainly.

AI does not understand language the way you and I do.

When you read the word home, something stirs inside you. A smell. A memory. The sound of a familiar voice calling you for dinner. Language, for humans, is rooted in lived experience — in the long, winding story of a life being lived.

AI has none of that. No childhood. No longing. No memory of firelight.

What AI does have is an extraordinary, almost ancient-scholar-like ability to recognize patterns. It has read — or rather, processed — more text than any human could read in a thousand lifetimes. And from all of that text, it has learned the shapes that language makes. The way certain words follow other words. The rhythm of a sentence. The grammar that holds meaning together like mortar between old stone.

So when we say AI “understands” language, what we really mean is: AI recognizes patterns well enough to respond in a way that makes sense. It’s pattern recognition at a jaw-dropping scale, and NLP is the field that makes it all possible.

What Is Natural Language Processing (NLP)?

Natural Language Processing — or NLP — is the branch of artificial intelligence that deals with teaching machines to read, interpret, and respond to human language.

Think of it as the bridge between the way we speak and the way computers think.

It’s the reason your chatbot at the bank actually answers your question instead of just crashing. It’s what lets Siri understand that “set an alarm for seven” means 7 AM, not 7 PM (most of the time, anyway). It’s how Google Translate can take a sentence written in French and hand it back to you in English — imperfect, sometimes, but remarkable nonetheless.

Natural language processing explained simply: it’s the art of teaching machines to make sense of human words.

NLP shows up quietly in so many corners of daily life:

- Chatbots that handle customer service questions

- Voice assistants like Alexa and Google Assistant

- Search engines that understand what you mean, not just what you typed

- Translation apps that carry language across borders

- Email filters that know spam from something important

Every single one of those tools is powered by the same foundational idea — that language can be broken down, analyzed, and worked with by a machine. It’s old craft meeting new technology. The loom of language, rewoven for a digital age.

How AI Converts Words Into Data

Here’s where things get genuinely interesting, so stay with me.

Computers, at their very core, only understand one thing: numbers. Not poetry. Not metaphor. Not the word melancholy. Just numbers — ones and zeros, stretching out into infinity.

So how do you turn words into something a machine can work with?

The answer is a pair of steps called tokenization and embedding.

First, a sentence gets broken down into smaller pieces — tokens. These might be whole words, or parts of words. “The cat sat” becomes ["The", "cat", "sat"].
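To make the tokenization step concrete, here is a minimal sketch in Python. It assumes a very simple rule (split on words and punctuation) and uses two made-up helper names, `tokenize` and `build_vocab`. Real systems typically use learned subword tokenizers, so treat this as an illustration of the idea rather than how any particular model does it.

```python
import re

def tokenize(text):
    """Split text into word tokens and standalone punctuation marks."""
    # \w+ grabs runs of letters, digits, and underscores;
    # [^\w\s] grabs a single punctuation mark.
    return re.findall(r"\w+|[^\w\s]", text)

def build_vocab(tokens):
    """Give each distinct token an integer ID in order of first appearance."""
    vocab = {}
    for token in tokens:
        if token not in vocab:
            vocab[token] = len(vocab)
    return vocab

tokens = tokenize("The cat sat")
vocab = build_vocab(tokens)
ids = [vocab[t] for t in tokens]

print(tokens)  # ['The', 'cat', 'sat']
print(ids)     # [0, 1, 2]
```

The integer IDs are the first bridge from words to numbers: once every token has an ID, the machine can look up the vector that belongs to it.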

Then each token gets converted into a number, or more precisely, into a long list of numbers called a vector. This vector doesn’t just label the word; its values are learned from how the word is used alongside other words in the training text.

Here’s the poetic part: words that are similar in meaning end up close together in this numerical space. “King” and “queen” live near each other. “Happy” and “joyful” are neighbors. “Cold” and “freezing” share a corner of this vast mathematical landscape.
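Here is a small sketch of what "close together" can mean in practice. The three-number vectors below are invented by hand purely for illustration (real models learn vectors with hundreds or thousands of numbers), and closeness is measured with cosine similarity, one common choice among several.

```python
import math

# Hand-made toy vectors, NOT taken from any real model.
embeddings = {
    "king":     [0.90, 0.80, 0.10],
    "queen":    [0.88, 0.82, 0.15],
    "cold":     [0.10, 0.20, 0.90],
    "freezing": [0.12, 0.15, 0.95],
}

def cosine_similarity(a, b):
    """Return a score near 1.0 when two vectors point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def nearest(word):
    """Return the other word whose vector is most similar to this one."""
    others = (w for w in embeddings if w != word)
    return max(
        others,
        key=lambda w: cosine_similarity(embeddings[word], embeddings[w]),
    )

print(nearest("king"))  # queen
print(nearest("cold"))  # freezing
```

Nothing in the code knows what a king or a cold day is. The neighbors fall out of the numbers alone, which is the whole point.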

So when AI is processing text, it’s not reading words the way you are right now. It’s navigating a universe of numbers — a map of meaning built from millions of human sentences. How AI understands text, at its most fundamental level, is really just this: numbers, arranged in patterns, reflecting the shape of human thought.

How AI Learns Language Patterns

No one is born knowing how to read. You learned. Slowly, with patience, through repetition and correction, you learned to make meaning from marks on a page.

AI learns similarly — just faster. Much, much faster.

The process is called training. During training, AI language models are fed enormous amounts of text — books, articles, websites, conversations — billions and billions of words drawn from the long record of human writing.

As the model processes all of this text, it begins to learn patterns:

- That “I am” is more likely to be followed by “happy” than by “elephant”

- That a question mark usually signals a query

- That the word “bank” means something different in “river bank” versus “savings bank”

It learns grammar not from a textbook, but from seeing grammar used correctly ten million times. It learns context not from a lecture, but from watching how meaning shifts across sentences.
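The "I am happy" pattern can be sketched with nothing more than counting. The tiny corpus below is made up for this example, and the counting approach is a deliberate simplification: modern language models learn these tendencies with neural networks rather than lookup tables, but the intuition of "what usually comes next" is the same.

```python
from collections import Counter, defaultdict

# A tiny invented corpus. Real models train on billions of words.
corpus = [
    "i am happy",
    "i am happy today",
    "i am tired",
    "i am here",
    "the elephant is big",
]

# For every pair of neighboring words, count which word comes next.
follow_counts = defaultdict(Counter)
for sentence in corpus:
    words = sentence.split()
    for i in range(len(words) - 2):
        context = (words[i], words[i + 1])
        follow_counts[context][words[i + 2]] += 1

def next_word_probability(context, word):
    """Estimate how often `word` followed `context` in the corpus."""
    counts = follow_counts[context]
    total = sum(counts.values())
    return counts[word] / total if total else 0.0

print(next_word_probability(("i", "am"), "happy"))     # 0.5
print(next_word_probability(("i", "am"), "elephant"))  # 0.0
```

With only five sentences, "elephant" gets a flat zero after "I am". A model trained on far more text would give it a small but nonzero chance, which is closer to how language really behaves.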

This is what AI language models are: vast, intricate pattern-recognition engines, shaped by the written wisdom of humanity. There’s something almost reverential about it, when you think about it. Digitized books, published articles, archived conversations: an enormous share of what people have written feeds into this machine’s picture of how we speak.

How AI Chatbots Understand Your Questions

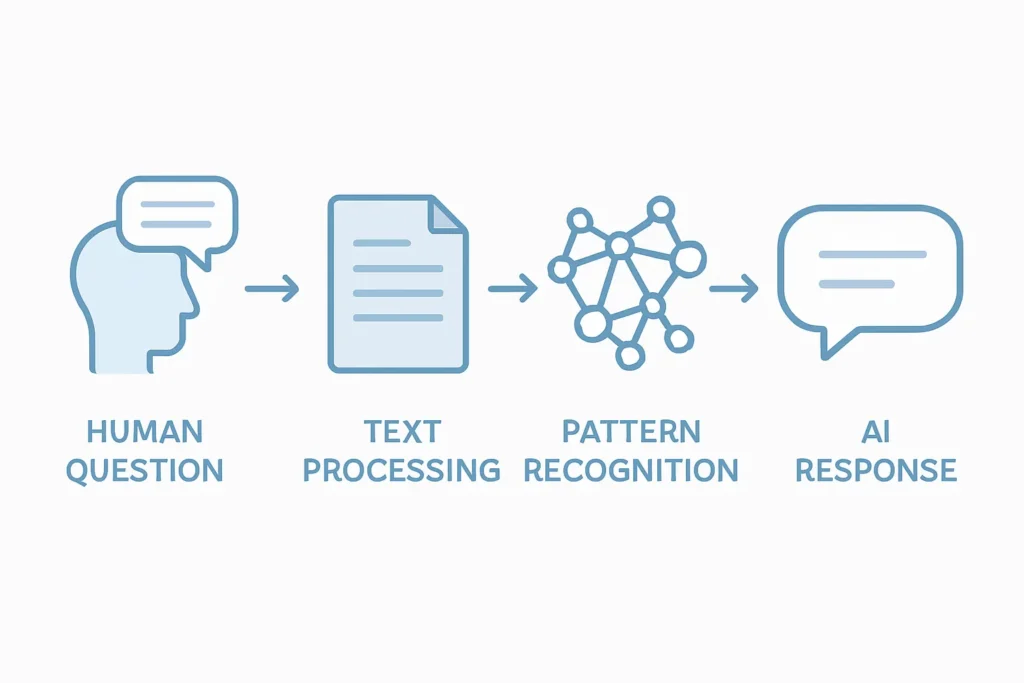

So what actually happens, step by step, when you type a question into ChatGPT or ask Siri something?

Let’s trace the journey:

Step 1: Break It Down. Your sentence gets tokenized, chopped into its component pieces so the AI can analyze each part.

Step 2: Identify the Intent. The AI looks at the pattern of your words and asks: What is this person trying to do? Are they asking for information? Requesting a task? Making small talk? This is called intent recognition, and it’s central to how AI chatbots understand questions.

Step 3: Gather Context. The AI looks at the whole conversation (not just the one sentence, but everything that came before) to understand what you actually mean, not just what you literally said.

Step 4: Generate a Response. Based on all of this, the AI predicts the most appropriate response, word by word, token by token, drawing on every pattern it learned during training.

It all happens astonishingly fast. The whole intricate process usually starts producing an answer within a second or two, though a long reply can take a little longer to finish.
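The four steps above can be sketched as a toy program. Everything here is an assumption made for illustration: the intent names, the keyword lists, and the canned replies are invented, and the intent step uses hand-written keyword rules. Systems like ChatGPT learn all of this from data instead of following rules like these, so read it as a map of the journey, not a blueprint.

```python
import re

# Hypothetical intents and trigger words, invented for this example.
INTENT_KEYWORDS = {
    "get_weather": {"weather", "rain", "forecast"},
    "set_alarm": {"alarm", "wake"},
}

# Canned replies standing in for real text generation.
REPLIES = {
    "get_weather": "Looking up the forecast.",
    "set_alarm": "Setting your alarm.",
    "unknown": "Sorry, I did not catch that.",
}

def tokenize(text):
    # Step 1: lowercase the text and split it into word tokens.
    return re.findall(r"[a-z']+", text.lower())

def recognize_intent(tokens):
    # Step 2: pick the intent whose keywords overlap the tokens the most.
    scores = {
        intent: len(keywords & set(tokens))
        for intent, keywords in INTENT_KEYWORDS.items()
    }
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else "unknown"

def respond(message, history):
    tokens = tokenize(message)
    intent = recognize_intent(tokens)
    # Step 3: if this message is unclear on its own, reuse the previous
    # intent so a follow-up like "And on Friday?" keeps its meaning.
    if intent == "unknown" and history:
        intent = history[-1]
    history.append(intent)
    # Step 4: produce a reply. A real model generates text token by
    # token; this sketch just looks up a template.
    return REPLIES[intent]

history = []
print(respond("What's the weather tomorrow?", history))  # Looking up the forecast.
print(respond("And on Friday?", history))                # Looking up the forecast.
```

Notice that the second question only makes sense because of the first. Take away the history and "And on Friday?" falls straight into the "unknown" bucket.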

Real Examples of NLP in Everyday Life

You’re already surrounded by NLP. You just might not have noticed it working its quiet craft.

- Siri and Google Assistant — when you ask a question out loud and get a spoken answer back, that’s NLP listening, interpreting, and responding in real time.

- ChatGPT — the whole conversation, the back-and-forth, the way it remembers what you said earlier in the chat? All NLP, all the time.

- Google Search — when you type a half-finished question and Google seems to know what you meant? That’s natural language understanding doing its work.

- Translation Apps — Google Translate, DeepL — carrying words from one language to another, navigating the wild variations of grammar and idiom and cultural meaning.

- Email spam filters — quietly reading every email that comes your way and deciding, with remarkable accuracy, what belongs in your inbox and what doesn’t.

NLP is not some future technology. It’s the present. It’s already woven into the daily fabric of how we communicate.

Why AI Sometimes Misunderstands Language

Even the most finely crafted instrument can miss a note.

AI misunderstands language for a few very human reasons:

Ambiguity. Language is gloriously, maddeningly ambiguous. “I saw her duck” — did she lower her head, or did you spot her waterfowl? Without more context, AI can genuinely struggle here.

Sarcasm and tone. When someone types “Oh great, another Monday,” they don’t actually mean great. Sarcasm requires cultural knowledge, emotional intelligence, and the kind of lived experience that AI simply doesn’t have.

Incomplete context. If you ask “What did he say?” without specifying who he is, the AI is working with a puzzle that’s missing half its pieces.

Cultural nuance. Idioms, slang, regional expressions — language is local in ways that are deeply tied to place and history. AI learns from the text it was trained on, and gaps in that training show up as gaps in understanding.

This is not a failure. It’s a reminder that language — real, living, breathing language — is one of the most complex things humanity has ever created.
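The "I saw her duck" problem is easy to reproduce in a few lines. The sketch below guesses which meaning of "duck" is intended by counting nearby cue words. The cue lists are invented for this example, and real models weigh context in far subtler ways, but the failure mode is the same: with no helpful context, there is simply nothing to go on.

```python
# Hypothetical cue words for each reading of "duck", invented for this example.
SENSE_CUES = {
    "bird": {"pond", "feathers", "quack", "feed", "swim"},
    "lower head": {"ball", "thrown", "dodge", "punch", "branch"},
}

def guess_sense(sentence):
    """Pick the reading of 'duck' with the most cue words in the sentence."""
    cleaned = sentence.lower().replace(".", "").replace(",", "")
    words = set(cleaned.split())
    scores = {sense: len(cues & words) for sense, cues in SENSE_CUES.items()}
    best_score = max(scores.values())
    winners = [sense for sense, score in scores.items() if score == best_score]
    # A tie (including zero cues on both sides) means the context did not help.
    return winners[0] if len(winners) == 1 else "ambiguous"

print(guess_sense("I saw her duck"))                           # ambiguous
print(guess_sense("I saw her duck swim across the pond"))      # bird
print(guess_sense("I saw her duck when the ball was thrown"))  # lower head
```

A few extra words of context are enough to tip the balance, which is why giving an AI more detail so often gets you a better answer.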

The Future of AI Language Understanding

The arc of NLP bends, slowly and surely, toward something more nuanced.

Researchers are working on AI that can handle longer conversations without losing the thread. Models that understand emotion and tone more reliably. Translation systems that don’t just convert words but carry the feeling of what was said from one language to another.

We’re moving toward conversational AI that can sit with ambiguity, recognize sarcasm, understand cultural context — not by feeling those things, but by having learned enough patterns to approximate understanding with increasing grace.

Smarter assistants. Richer translation. AI that can read between the lines the way a wise old reader can.

The technology is still young. But it’s learning — the way everything worthwhile learns — through exposure, repetition, and time.

A Final Thought

Here’s the truth, simple and clear:

AI does not truly understand language the way humans do. It doesn’t know longing. It doesn’t feel the weight of a word chosen carefully. It doesn’t hear the silence between sentences.

But by processing vast, almost incomprehensible amounts of human text, AI has learned the patterns that language makes — and those patterns are enough to hold a conversation, answer a question, write an email, explain a black hole.

NLP is the quiet engine behind the tools millions of people reach for every single day. It is, in its way, a kind of miracle: not the miracle of understanding, but the miracle of pattern, repeated and refined until it begins to feel like understanding.

And that? That’s honestly kind of beautiful.

Now you know how AI understands language. Share this with someone who asked their phone a question today and never once wondered how it knew what to say.